Here’s a link to a paper called “Escape From Model-Land”. It’s written by an economist/modeler at the London School of Economics and a mathematician/statistician at Pembroke College, Oxford and LSE.

From the abstract:

The authors present a short guide to some of the temptations and pitfalls of model-land, some directions towards the exit, and two ways to escape. Their aim is to improve decision support by providing relevant, adequate information regarding the real-world target of interest, or making it clear why today’s models are not up to that task for the particular target of interest.

I like how the authors (Idea 1) distinguish between “weather-like” tasks and “climate-like” tasks. In our world, a “weather-like task” might be a growth and yield or fire behavior model, for which more real-world data is always available to improve the model (that is, if there is a feedback process and someone whose job it is to care for the model). Model upkeep, however, is not as scientifically cool as model development, so there’s that.

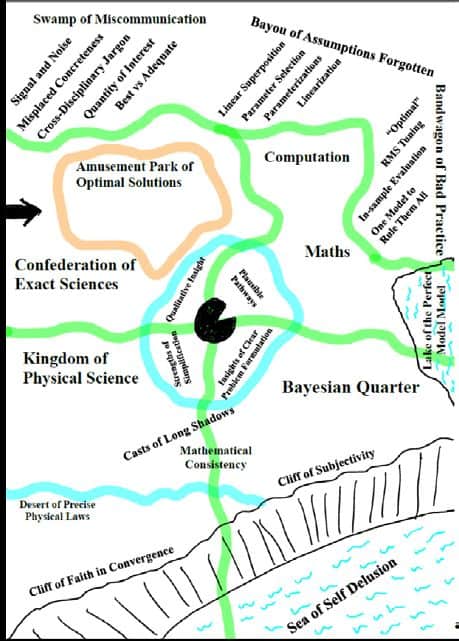

Our image of model land is intended to illustrate Whitehead’s (1925) “Fallacy of Misplaced Concreteness”. Whitehead (1925) reminds us that “it is of the utmost importance to be vigilant in critically revising your modes of abstraction”. Since obviously the “disadvantage of exclusive attention to a group of abstractions, however well-founded, is that, by the nature of the case, you have abstracted from the remainder of things”. Model-land encompasses the group of abstractions that our model is made of, the real-world includes the remainder of things.

Big Surprises, for example, arise when something our simulation models cannot mimic turns out to have important implications for us. Big Surprises invalidate (not update) model-based probability forecasts: the conditions I in any conditional probability P(x|I) changes. In “weather-like” tasks, where there are many opportunities to test the outcome of our model against a real observed outcome, we can see when/how our models become silly (though this does not eliminate every possibility of a Big Surprise). In “climate-like” tasks, where the forecasts are made truly out-of-sample, there is no such opportunity and we rely on judgements about the quality of the model given the degree to which it performs well under different conditions.

In economics, forecasting the closing value of an exchange rate or of Brent Crude is a weather-like task: the same mathematical forecasting system can be used for hundreds or thousands of forecasts, and thus a large forecast-outcome archive can be obtained. Weather forecasts fall into this category; a “weather model” forecast system produces forecasts every 6 hours for, say, 5 years. In climate-like tasks there may be only one forecast: will the explosion of a nuclear bomb ignite and burn off the Earth’s atmosphere (this calculation was actually made)? How will the euro respond if Greece leaves the Eurozone? The pound? Or the system may change so much before we again address the same question that the relevant models are very different, as in year-ahead GDP forecasting, or forecasting inflation, or the hottest (or wettest) day of the year 2099 in the Old Quad of Pembroke College, Oxford.

……
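To make the “forecast-outcome archive” idea concrete, here is a minimal sketch of how a weather-like task can be checked against reality. It is not from the paper: the Brier score is simply one common way to score probability forecasts, and the function names and the tiny archive below are made up for illustration.

```python
from statistics import mean


def brier_score(probs, outcomes):
    """Mean squared gap between forecast probabilities and 0/1 outcomes.

    0.0 is a perfect score; larger values are worse.
    """
    if not probs or len(probs) != len(outcomes):
        raise ValueError("need equally long, non-empty forecast and outcome lists")
    return mean((p - o) ** 2 for p, o in zip(probs, outcomes))


def brier_skill_score(probs, outcomes):
    """Skill relative to always forecasting the observed base rate.

    Positive means the model beats that naive baseline; zero or negative
    means it adds nothing over the baseline on this archive.
    """
    base_rate = mean(outcomes)
    reference = brier_score([base_rate] * len(outcomes), outcomes)
    if reference == 0:
        raise ValueError("outcomes never vary, so the skill score is undefined")
    return 1 - brier_score(probs, outcomes) / reference


# Hypothetical archive: forecast probability of an event, and whether it happened.
forecasts = [0.9, 0.8, 0.2, 0.1, 0.7, 0.3]
outcomes = [1, 1, 0, 0, 1, 0]

print(round(brier_score(forecasts, outcomes), 4))
print(round(brier_skill_score(forecasts, outcomes), 4))
```

A check like this only works when the archive exists. For a climate-like task with a single out-of-sample forecast there is nothing to feed into it, which is the authors’ point.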

(Idea 2). The unpredictable or Big Surprise. People working with “weather-like” tasks may develop a high level of humility regarding the many unknowns, small and Big, that can happen in the real world. It’s possible that people working with “climate-like” tasks have fewer opportunities to develop that appreciation, or perhaps that they must trudge forward knowing about known unknowns and unknown unknowns. The authors’ discussion of the difference between how economists and climate modelers use model outputs (intervening expert judgement) is also interesting.

And the questions we asked in the last post:

“It is helpful to recognise a few critical distinctions regarding pathways out of model-land and back to reality. Is the model used simply the “best available” at the present time, or is it arguably adequate for the specific purpose of interest? How would adequacy for purpose be assessed, and what would it look like? Are you working with a weather-like task, where adequacy for purpose can more or less be quantified, or a climate-like task, where relevant forecasts cannot be evaluated fully? Mayo (1996) argues that severe testing is required to build confidence in a model (or theory); we agree this is an excellent method for developing confidence in weather-like tasks, but is it possible to construct severe tests for extrapolation (climate-like) tasks? Is the system reflexive; does it respond to the forecasts themselves? How do we evaluate models: against real-world variables, or against a contrived index, or against other models? Or are they primarily evaluated by means of their epistemic or physical foundations? Or, one step further, are they primarily explanatory models for insight and understanding rather than quantitative forecast machines? Does the model in fact assist with human understanding of the system, or is it so complex that it becomes a prosthesis of understanding in itself?”

“A prosthesis of understanding” (for prosthesis, I think “substitute”) reminds me of linear programming models (most notably, in our case, FORPLAN).

Speaking of FORPLAN, older readers (and younger historians) might enjoy Randal O’Toole’s memoirs, which he is serializing as a part of his Antiplanner blog. His musings include deconstructing FORPLAN, including timber yield tables that assume trees grow like crazy.

O’Toole is author of the 1988 book Reforming the Forest Service. He’s a Cato Institute expert on transportation and critic of oxymoronic “smart growth.”

Thanks, Andy!

Modeling of complex systems to serve ulterior motives such as neoliberalism and libertarianism, etc., like all good propaganda, must employ some kernels of Truth. Unfortunately, both lack the ethical bases necessary for us to survive those skewed worldviews.

That the necessity of the precautionary principle remains absent in all planning of consequence is a testament to the protracted state of profound hubris and denialism that has brought the Doomsday Clock to within 2 minutes of Anthropogenic apocalypse.

In my experience, reliance on model results as absolute information rather than “primarily explanatory…for insight” has been the problem. Andy points to FORPLAN and its fragility in predicting results of land management (“you can cut all this volume and protect the environment also!”). For me, the false expectation with FORPLAN modeling is exhibited with the Allowable Sale Quantity calculation. Unfortunately for the Forest Service, it never invested appropriately in monitoring (and evaluation!) to determine whether the modeling predictions even had a shred of reality to them. Politically, it did not matter.

You know, Tony, we can be critical of the FS not monitoring and evaluating (and perhaps should be) but other modelers don’t necessarily monitor and evaluate either. Even journalists, for example, don’t monitor whether their dire predictions about, say, Trump and science ever came true. Exceptions to this general rule would be interesting.

Fair enough. I was focusing on the authors’ comments that not validating models with real-life experience further weakens the model’s ability to provide meaningful information. What is it they say about unreliable models or input data? “Crap in, crap out”?

If validating predictions is indeed the exception and not the rule, I am amazed how we all have even got this far in our civilization. “Luck” is more of a factor than any informed choice to reach success?

Yes, you raise an important distinction. People evaluate “that Forplan didn’t hold up” or “those trees didn’t grow the way the model said” or “the average global temperature is not what models predicted” but it’s not a formal monitoring and evaluation methodology. Probably the people who really care are paying attention.

Post-decision/action formal evaluations do seem rare, but some folks do them. I think of Fire after-actions, and I think for ESA there might be annual monitoring reports. Also BMP evaluation reports by state foresters.

However, the point is that not enough post-action evaluation is occurring. I agree that the firefighting organization is probably the most consistent in the Forest Service in performing this. Just think how much less modeling would occur if the agency were able to assimilate a vast inventory of information.

The conversation here is remarkably akin to passengers arguing over modeling proving the claim of “unsinkable” on the tilting deck of the Titanic as Sharon’s “Sea of Self-Delusions” rises on entire continents:

https://www.nytimes.com/interactive/2019/10/29/climate/coastal-cities-underwater.html

“Rising Seas Will Erase More Cities by 2050, New Research Shows”

By Denise Lu and Christopher Flavelle, Oct. 29, 2019

Rising seas could affect three times more people by 2050 than previously thought, according to new research, threatening to all but erase some of the world’s great coastal cities.

“The authors of a paper published Tuesday developed a more accurate way of calculating land elevation based on satellite readings, a standard way of estimating the effects of sea level rise over large areas, and found that the previous numbers were far too optimistic. The new research shows that some 150 million people are now living on land that will be below the high-tide line by midcentury.”